Tech

Flutterwave Partners with EFCC to Establish Cybercrime Research Center in Nigeria

Nigerian fintech giant Flutterwave has partnered with the Economic and Financial Crimes Commission (EFCC) to create a Cybercrime Research Center in Nigeria. The initiative aims to combat internet crime, enhance transaction security, and provide sustainable opportunities for youths across the country.

Memorandum of Understanding (MoU)

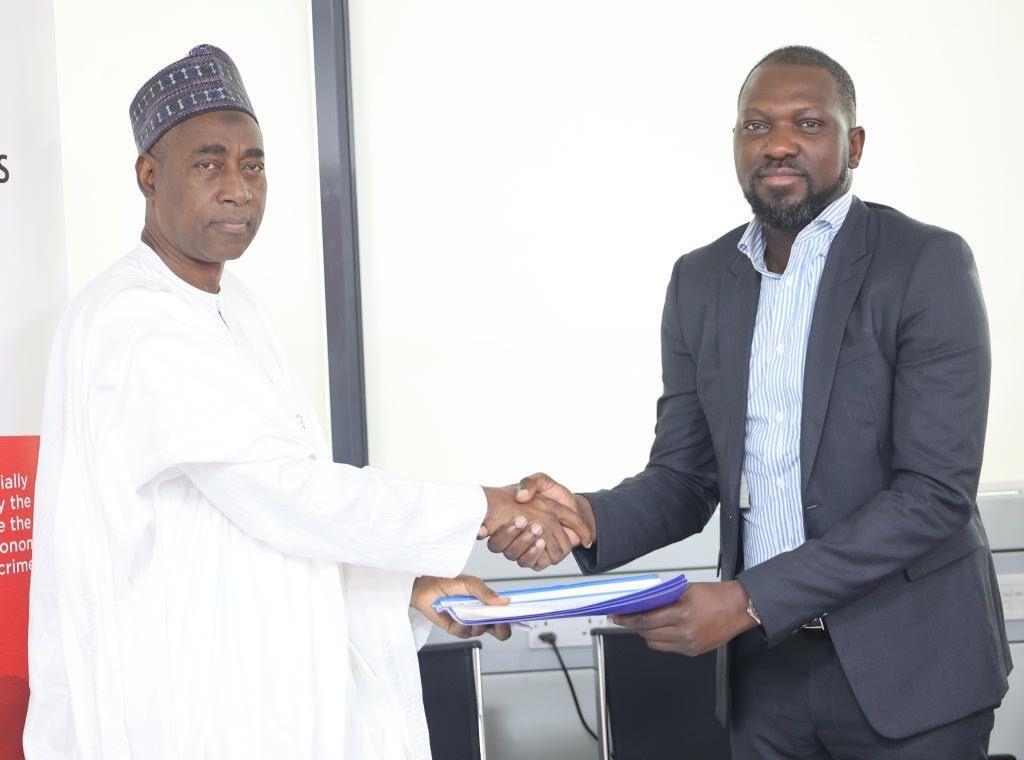

A Memorandum of Understanding was signed on June 14, 2024, by the Secretary of the EFCC, Mr. Mohammadu Hammajoda, and the CEO of Flutterwave, Olugbenga Agboola. This partnership marks a significant step in the fight against financial crimes and underscores the commitment of both parties to fostering a secure financial environment.

Objectives of the Cybercrime Research Center

The Cybercrime Research Center, to be established at the new EFCC Academy, will serve as a hub for advanced research, training, and capacity building. The center will focus on several key areas:

- Advanced Fraud Detection and Prevention
  - Develop and implement cutting-edge technologies to detect and prevent financial fraud.
  - Offer comprehensive training for law enforcement and industry professionals to effectively combat modern financial crimes.

- Collaborative Research and Policy Development
  - Engage in joint research initiatives and policy formulation to enhance understanding and regulation of financial crime.
  - Provide a platform for the exchange of ideas and best practices between the public and private sectors.

- Youth Empowerment and Capacity Building
  - Provide high-end training and research opportunities for 500 youths, equipping them with the skills needed to navigate and excel in the digital economy.

- Technological Advancement and Resource Enablement
  - Create a repository of advanced tools, technologies, and resources to support financial crime investigations.
  - Develop protocols for addressing emerging threats, such as cryptocurrency-related crimes.

Statements from Key Stakeholders

Flutterwave’s CEO, Olugbenga Agboola, emphasized the company’s dedication to promoting secure transactions:

“This initiative underscores our commitment to creating a fraud-free financial ecosystem and leading the charge in safeguarding transactions across Africa. We applaud the EFCC’s relentless efforts to combat internet fraud and other illicit activities in the financial sector.”

EFCC Executive Chairman, Mr. Ola Olukoyede, expressed appreciation for the partnership:

“The EFCC is impressed with the strides and expanse of Flutterwave across Africa. This partnership marks a significant leap forward in our efforts to combat financial crimes and ensure a secure financial landscape for Nigerians. The Cybercrime Research Center will significantly enhance our capabilities to prevent, detect, and prosecute financial crimes.”

Importance of the Initiative

As the payments ecosystem evolves, financial fraud remains a significant challenge, threatening both the stability of financial systems and public trust in them. The partnership between Flutterwave and the EFCC exemplifies how public-private collaboration can address these issues, paving the way for a more secure and prosperous economy in Nigeria and across Africa.

The Cybercrime Research Center is poised to play a crucial role in enhancing the fight against financial crimes, ensuring safer transactions, and empowering the next generation with the necessary skills to thrive in the digital economy.

Tech

OpenAI Revises Pentagon AI Deal After Backlash Over Military Use

OpenAI says it is amending its recent agreement with the United States Department of Defense following criticism over the potential use of its technology in classified military operations.

Chief executive Sam Altman announced that the company will insert clearer restrictions into the contract, explicitly prohibiting the intentional use of its systems for domestic surveillance of US citizens and nationals.

The controversy followed tensions between OpenAI’s rival Anthropic and the Pentagon over concerns that Anthropic’s AI model, Claude, could be used for mass surveillance or in fully autonomous weapons systems.

In a statement over the weekend, OpenAI said its Pentagon agreement contained “more guardrails than any previous agreement for classified AI deployments”. However, Altman later acknowledged that the rollout of the deal had been rushed.

“The issues are super complex, and demand clear communication,” he wrote on social media, adding that the company had sought to de-escalate tensions but recognised that the announcement appeared “opportunistic and sloppy”.

Under the revised terms, intelligence agencies such as the National Security Agency would require additional contractual modifications before being permitted to use OpenAI systems.

The backlash has had measurable effects. Reports indicate that day-over-day uninstalls of the ChatGPT mobile app surged sharply following the announcement, while Anthropic’s Claude climbed to the top of Apple’s App Store rankings.

Anthropic’s model had previously been blacklisted by the administration of Donald Trump after the company refused to abandon a corporate principle barring the use of its technology in fully autonomous weapons. Despite that position, reports have since indicated that Claude was used in the US-Israel conflict with Iran shortly after the ban.

The Pentagon has declined to comment on its arrangements with Anthropic.

Tech

X to stop Grok AI from undressing images of real people

X has announced that its artificial intelligence tool, Grok, will no longer be able to edit images of real people to depict them in revealing clothing in jurisdictions where such activity is illegal, following widespread backlash over the misuse of sexualised AI deepfakes.

In a statement published on the platform, X said it had introduced new safeguards to prevent the Grok account from being used to manipulate photos of real individuals in a sexualised manner. “We have implemented technological measures to prevent the Grok account from allowing the editing of images of real people in revealing clothing,” the company said.

The move has been welcomed by UK authorities, who had previously raised concerns about the tool’s use. The UK government described the decision as a “vindication” of its calls for X to take stronger action to control Grok. Media regulator Ofcom also said the change was a “welcome development”, while stressing that its investigation into whether the platform breached UK laws is still under way.

“We are working round the clock to progress this and get answers into what went wrong and what’s being done to fix it,” Ofcom said, signalling continued scrutiny despite the latest measures.

Technology Secretary Liz Kendall welcomed X’s announcement but emphasised the need for accountability. She said she would “expect the facts to be fully and robustly established by Ofcom’s ongoing investigation”, underlining the government’s commitment to ensuring online safety rules are upheld.

However, campaigners and victims of AI-generated sexualised images say the decision came only after significant harm had already been caused. Journalist and campaigner Jess Davies, who was among the women whose images were edited using Grok, described the changes as a “positive step” but said the feature should never have been permitted in the first place.

Tech

Alibaba Opens AI Video Generation Model for Free Use Globally

Chinese tech giant Alibaba has made its latest AI video generation models freely available worldwide, intensifying competition with rivals such as OpenAI.

The company announced on Wednesday that it is open-sourcing four models from its Wan2.1 series, its most advanced family of AI models, which can generate images and videos from text and image inputs. The models will be accessible via Alibaba Cloud’s ModelScope and Hugging Face, making them available to academics, researchers, and businesses globally.

Following the announcement, Alibaba’s Hong Kong-listed shares surged nearly 5%, continuing a strong rally that has seen the stock gain 66% in 2025. Investors have been optimistic about the company’s growing role in AI and its improving financial performance, buoyed by recent policy signals from Chinese President Xi Jinping supporting the domestic private sector.

Alibaba’s move aligns with a broader trend in China, where companies are increasingly embracing open-source AI. In January, DeepSeek, another Chinese firm, shook global markets by revealing that its AI model was trained at a fraction of the cost of competitors, using less-advanced Nvidia chips. Both Alibaba’s and DeepSeek’s models are open-source, meaning they can be downloaded and modified freely, unlike proprietary AI models such as those developed by OpenAI, which generate direct revenue.

The shift towards open-source AI has sparked debate over whether AI models will become commoditized. While companies like Meta are leading the open-source push in the U.S. with their Llama models, Chinese firms have been particularly aggressive in this space, aiming to drive innovation and build global AI communities.
